Some more tweaks to my Python script

Update: you can find the outcome of all this in a later post: Project BHS

The comments on my previous post gave me hints for further increasing the efficiency of a script I am working on. Here I present the advice I have followed, and the speed gain each piece provided. I will speak of “speedup” instead of timings, because this second set of tests was run on a different computer. The “base” speed is the last value of my previous test set (1.5 s on that computer, 1.66 s on this one). A speedup of “2” thus means half the execution time (0.83 s on this computer).
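To make the convention concrete, here is a minimal sketch of how a speedup figure is computed. The function name `speedup` is my own choice for illustration; the timings are the ones quoted above.

```python
def speedup(base_seconds, new_seconds):
    """Return how many times faster the new run is than the base run."""
    return base_seconds / new_seconds


BASE = 1.66  # seconds: last version of the previous post, on this computer

# A run that takes half the base time has a speedup of 2.
print(speedup(BASE, 0.83))
```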

Version 6: Andrew Dalke suggested the substitution of:

line = re.sub('>','<',line)

with:

line = line.replace('>','<')

Avoiding the re module speeds things up when we are searching for fixed strings, because the additional features of the re module are not needed.

This is true, and I got a speedup of 1.37.
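For anyone who wants to check this on their own machine, a rough sketch with `timeit` follows. The sample line is made up (it only mimics the shape of the log lines), and the absolute numbers will differ from one computer to another.

```python
import re
import timeit

line = "<total_credit>1234.5</total_credit>\n"  # made-up sample line

# For a fixed, one-character pattern both calls return the same string.
assert re.sub('>', '<', line) == line.replace('>', '<')

# Time each variant over the same number of calls.
t_re = timeit.timeit(lambda: re.sub('>', '<', line), number=100_000)
t_str = timeit.timeit(lambda: line.replace('>', '<'), number=100_000)

print(f"re.sub: {t_re:.3f} s   str.replace: {t_str:.3f} s")
```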

Version 7: Andrew Dalke also suggested substituting:

search_cre = re.compile(r'total_credit').search

if search_cre(line):

with:

if 'total_credit' in line:

This is more readable, more concise, and apparently faster. Doing it increases the speedup to 1.50.
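The two tests are interchangeable here only because 'total_credit' contains no regular expression metacharacters. A small sketch, with two made-up sample lines, that checks they agree:

```python
import re

search_cre = re.compile(r'total_credit').search

# Made-up lines imitating the two kinds of line in the input.
lines = [
    "<total_credit>1234.5</total_credit>\n",
    "<os_name>Linux</os_name>\n",
]

for line in lines:
    # bool() collapses the match object (or None) to True/False.
    assert bool(search_cre(line)) == ('total_credit' in line)

hits = [line for line in lines if 'total_credit' in line]
print(len(hits))
```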

Version 8: Andrew Dalke also proposed flattening some variables, and specifically avoiding dictionary lookups inside loops. I went even further than his advice, and substituted:

    stat['win'] = [0,0]

    loop:
        stat['win'][0] = something
        stat['win'][1] = somethingelse

with:

    win_stat_0 = 0
    win_stat_1 = 0

    loop:
        win_stat_0 = something
        win_stat_1 = somethingelse

This pushed the speedup further up, to 1.54.
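A runnable sketch of the same idea follows. The function names and the accumulation logic (a counter and a running sum) are hypothetical, chosen only to have something concrete to update inside the loop; the real script stores other things.

```python
def tally_dict(values):
    """Update counters stored in a list inside a dictionary."""
    stat = {'win': [0, 0]}
    for v in values:
        stat['win'][0] += 1   # dict lookup, then list index, on every pass
        stat['win'][1] += v
    return stat['win']


def tally_flat(values):
    """Same bookkeeping with plain local variables."""
    win_stat_0 = 0
    win_stat_1 = 0
    for v in values:
        win_stat_0 += 1       # local variable access only
        win_stat_1 += v
    return [win_stat_0, win_stat_1]


print(tally_dict([1, 2, 3]), tally_flat([1, 2, 3]))
```

Both functions return the same result; the flat version simply does less work per iteration. If the rest of the program expects the dictionary, it can be filled in once after the loop.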

Version 9: Justin proposed reducing the number of times some patterns were matched, and extracting some info more directly. I achieved that by substituting:

    loop:
        if 'total_credit' in line:
            line = line.replace('>','<')
            aline = line.split('<')
            credit = float(aline[2])

with:

    pattern = r'total_credit>([^<]+)<'
    search_cre = re.compile(pattern).search

    loop:
        if 'total_credit' in line:
            cre = search_cre(line)
            credit = float(cre.group(1))

This trick saved enough to increase the speedup to 1.62.
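The two extraction methods can be compared side by side in a small sketch. The function names and the sample line are made up for illustration; the pattern is the one shown above.

```python
import re

search_cre = re.compile(r'total_credit>([^<]+)<').search


def credit_by_split(line):
    """Old way: turn every '>' into '<', split, and pick the third field."""
    aline = line.replace('>', '<').split('<')
    return float(aline[2])


def credit_by_regex(line):
    """New way: capture the text between 'total_credit>' and the next '<'."""
    cre = search_cre(line)
    return float(cre.group(1))


line = "  <total_credit>1234.5</total_credit>\n"  # made-up sample line
assert credit_by_split(line) == credit_by_regex(line)
print(credit_by_regex(line))
```

The regex version builds no intermediate string and no list, which is presumably where the saving comes from.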

Version 10: The next tweak was an idea of mine. I was digesting a huge log file with zcat and grep, to produce a smaller intermediate file, which Python would process. This intermediate file consists of alternating lines with “total_credit” then “os_name” then “total_credit”, and so on. When processing this file with Python, I was searching each line for “total_credit” to differentiate between the two kinds of line, like this:

    for line in f:
        if 'total_credit' in line:
            do something
        else:
            do somethingelse

But the alternating structure of my input would allow me to do:

    odd = True
    for line in f:
        if odd:
            do something
            odd = False
        else:
            do somethingelse
            odd = True

Presumably, checking the truth value of a boolean is faster than searching the line for a substring, although in this case the gain was not huge: the speedup went up to 1.63.
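A self-contained sketch of the toggle follows. The function name and the sample lines are hypothetical, and the whole approach assumes the input alternates strictly: a single missing or duplicated line would silently misalign everything after it.

```python
def split_alternating(lines):
    """Route lines by position: 1st, 3rd, ... are credits; 2nd, 4th, ... are OS names."""
    credits, os_names = [], []
    odd = True
    for line in lines:
        if odd:
            credits.append(line)
        else:
            os_names.append(line)
        odd = not odd  # flip the flag instead of inspecting the line
    return credits, os_names


# Made-up input with the alternating structure described above.
sample = [
    "<total_credit>10.0</total_credit>",
    "<os_name>Linux</os_name>",
    "<total_credit>20.5</total_credit>",
    "<os_name>Darwin</os_name>",
]
credits, os_names = split_alternating(sample)
print(len(credits), len(os_names))
```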

Version 11: Another clever suggestion by Andrew Dalke was to avoid using the intermediate file, and use os.popen to connect to and read from the zcat/grep command directly. Thus, I substituted:

    os.system('zcat host.gz | grep -F -e total_credit -e os_name > '+tmp)
    f = open(tmp)
    for line in f:
        do something

with:

    f = os.popen('zcat host.gz | grep -F -e total_credit -e os_name')
    for line in f:
        do something

This saves disk I/O time, and the performance is increased accordingly. The speedup goes up to 1.98.
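The same streaming idea can also be written with the subprocess module, which is what os.popen is built on in current Python versions. This is only a sketch of an alternative, not what my script does: the helper name `stream_lines` is made up, and the demo runs a trivial Python child process so that it works without zcat or host.gz being present.

```python
import subprocess
import sys


def stream_lines(cmd, shell=False):
    """Yield lines from a command's standard output as they are produced."""
    with subprocess.Popen(cmd, stdout=subprocess.PIPE, text=True,
                          shell=shell) as proc:
        for line in proc.stdout:
            yield line


# Demo with a harmless child process standing in for the real pipeline.
demo_cmd = [sys.executable, "-c", "print('total_credit'); print('os_name')"]
for line in stream_lines(demo_cmd):
    print(line.rstrip())

# For the pipeline of this post, one would pass the command string and
# shell=True so that the shell sets up the pipe:
#   stream_lines('zcat host.gz | grep -F -e total_credit -e os_name', shell=True)
```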

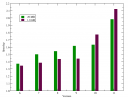

All the values I have given are for a sample log (from MalariaControl.net) with 7 MB of gzipped info (49 MB uncompressed). I also tested my scripts with a 267 MB gzipped (1.8 GB uncompressed) log (from SETI@home), and a plot of speedups vs. versions follows:

Notice how the last modification (avoiding the temporary file) matters much more for the bigger file than for the smaller one. Recall also that the odd/even modification (Version 10) makes very little difference for the small file, but is quite effective for the big one (compare it with Version 9).

The plot doesn’t show it (it compares versions on the same input, not one input against the other), but Version 11 of the script processes the 267 MB log faster than Version 1 processed the 7 MB one! For the 7 MB input, the overall speedup from Version 1 to Version 11 is above 50.

Aaron said,

February 20, 2008 @ 7:54 am

Just a quick grammar note: “suggested to substitute” is not correct English. I would instead use “suggested substituting” or “suggested the substitution”. Gerunds vs infinitives can be a bit tricky.

isilanes said,

February 20, 2008 @ 9:04 am

Point taken!

Justin said,

February 20, 2008 @ 13:27 pm

try:

cre = search_cre(line)

if cre:

credit = float(cre.group(1))

The other thing I talked about, you can do

cpattern = r'total_credit>(?P<cre>[^<]+)(?P<os>[^<]+)<'

pattern = "(%s)|(%s)" % (cpattern, opattern)

and then just match on that, and use groupdict()

Justin said,

February 20, 2008 @ 13:28 pm

grr, it ate the comment again :(

isilanes said,

February 20, 2008 @ 13:43 pm

@Justin(3): I will try your first suggestion, but it is not guaranteed to save time. In Version 9 maybe it could, but probably not in Version 10+. Right now the script tests a boolean 100% of the time, plus an re.search an additional 50% of the time. What you propose is to remove one of the two tests (the boolean), and do the re.search 100% of the time. If re.search and the boolean test were equally fast, your solution would be a brilliant way of speeding things up by 50% (to 100%, down from 150%). But the question is whether 2 boolean tests are slower than one re.search. Empirical tests will tell…

The second suggestion, I need time to digest the RE :^)

@all: I am learning a lot from your comments, folks. Thanks!

isilanes said,

February 20, 2008 @ 14:00 pm

@Justin(3): I just tried your first suggestion, and it seems to be a bit slower, not faster (probably for the reasons I give in (5)).

Justin said,

February 20, 2008 @ 17:03 pm

It would have probably been faster if you weren’t pre-filtering the lines…

I made a post here http://www.bouncybouncy.net/ramblings/posts/regex_with_named_groups/

explaining what I was trying to say in the comments :)

PJ said,

February 26, 2008 @ 20:41 pm

How about posting the full text of v11 ?

isilanes said,

February 26, 2008 @ 20:56 pm

“How about posting the full text of v11 ?”

Thanks for pointing that out… I will.

Project BHS | handyfloss said,

March 13, 2008 @ 20:49 pm

[…] outlined in some previous posts[1,2,3,4], I have been playing around with a piece of Python code to process some log files. The log files […]

isilanes said,

March 13, 2008 @ 20:54 pm

@PJ: I just posted the full code of the last version. It is available at my home page, and I comment on it in a new post.